Low-light photography remains one of the most demanding challenges in smartphone imaging. As users increasingly rely on their phones for nighttime snapshots—whether capturing cityscapes at dusk, indoor family moments, or spontaneous evening adventures—the ability to produce clear, noise-free, and color-accurate images in dim environments has become a critical differentiator. The iPhone 16 Pro and the Google Pixel 8 Pro represent the pinnacle of mobile camera technology from Apple and Google, respectively. Both devices boast advanced sensors, computational photography, and AI-driven enhancements. But when the lights go down, which one truly excels?

This article dives deep into the low-light capabilities of both smartphones, comparing hardware specifications, image processing techniques, real-world performance, and user experience to determine which device delivers superior results after dark.

Sensor Technology and Hardware Foundations

The foundation of any great low-light camera begins with its physical components. Larger sensors, wider apertures, and improved pixel binning all contribute to better light capture. Let’s examine the core hardware behind each device.

The iPhone 16 Pro features an upgraded 48MP main sensor with a larger surface area than its predecessor. Apple has reportedly increased the sensor size by approximately 25%, allowing more photons to reach the photodiodes. The aperture remains at ƒ/1.78, but improvements in microlens efficiency and backside illumination enhance quantum efficiency. Additionally, sensor-shift optical image stabilization (OIS) has been refined to allow longer exposure times without blur from camera shake.

In contrast, the Pixel 8 Pro uses a 50MP Samsung ISOCELL sensor with an even wider ƒ/1.68 aperture. Google has kept comparatively large individual pixels (1.2µm native, 2.4µm effective via 2x2 binning), prioritizing light sensitivity over sheer megapixel count. The inclusion of dual-pixel phase detection autofocus across the entire frame improves subject tracking in near-darkness, a subtle but meaningful advantage.
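To make the binning figures above concrete, here is a minimal sketch of 2x2 binning on a tiny grid of made-up pixel values. The function names `bin_2x2` and `binned_pitch_um` and the sample frame are hypothetical, chosen only for illustration. Real sensors bin in hardware on a color filter mosaic, so this shows the arithmetic rather than either phone's actual readout.

```python
def bin_2x2(frame):
    """Sum each 2x2 block of a 2D list of pixel values into one output pixel."""
    rows, cols = len(frame), len(frame[0])
    if rows % 2 or cols % 2:
        raise ValueError("frame dimensions must be even")
    return [
        [
            frame[r][c] + frame[r][c + 1] + frame[r + 1][c] + frame[r + 1][c + 1]
            for c in range(0, cols, 2)
        ]
        for r in range(0, rows, 2)
    ]


def binned_pitch_um(native_pitch_um, factor=2):
    """Effective pixel pitch after combining factor x factor native pixels."""
    return native_pitch_um * factor


# Hypothetical 4x4 patch of raw pixel readings (arbitrary units).
frame = [
    [10, 12, 9, 11],
    [11, 13, 10, 12],
    [8, 9, 14, 15],
    [7, 10, 13, 16],
]

binned = bin_2x2(frame)
print(binned)                 # [[46, 42], [34, 58]]
print(binned_pitch_um(1.2))   # 2.4 (effective pitch in micrometers)
```

Each output pixel collects the signal of four native pixels, which is why a 1.2µm pixel is described as behaving like a 2.4µm pixel after binning, at the cost of a quarter of the resolution.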

| Feature | iPhone 16 Pro | Pixel 8 Pro |
|---|---|---|
| Main Sensor Resolution | 48MP | 50MP |
| Aperture | ƒ/1.78 | ƒ/1.68 |
| Pixel Size (Binned) | 2.44µm | 2.4µm |
| Image Stabilization | Sensor-shift OIS | OIS + EIS |
| Night Mode Exposure Max | 5 seconds | 6 seconds |

While the differences appear minor on paper, they compound in practical use. The Pixel’s marginally wider aperture and longer maximum exposure time give it a theoretical edge in photon collection, particularly in static scenes.
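As a rough back-of-the-envelope check on that theoretical edge, the sketch below uses only the aperture and maximum exposure values from the table. It assumes light per unit sensor area scales with the inverse square of the f-number and linearly with exposure time, and it ignores sensor size, lens transmission, and processing. The helper name `relative_light` is hypothetical.

```python
import math


def relative_light(f_number_a, f_number_b):
    """Light per unit sensor area through aperture A relative to aperture B."""
    return (f_number_b / f_number_a) ** 2


# Values taken from the comparison table above.
aperture_gain = relative_light(1.68, 1.78)   # Pixel 8 Pro vs iPhone 16 Pro
exposure_gain = 6 / 5                        # 6 s vs 5 s maximum exposure
combined = aperture_gain * exposure_gain
stops = math.log2(combined)                  # one stop is a doubling of light

print(f"{aperture_gain:.2f}")  # 1.12
print(f"{combined:.2f}")       # 1.35
print(f"{stops:.2f}")          # 0.43
```

Under these simplifying assumptions the Pixel gathers about 12% more light from the aperture alone and roughly 35% more at maximum exposure, which is less than half a stop. That is consistent with calling the hardware advantage marginal.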

Computational Photography: Apple’s Photonic Engine vs Google’s Night Sight

Hardware alone doesn’t win the night. Modern smartphones rely heavily on computational photography to reconstruct usable images from minimal light data. Apple and Google take distinct approaches.

Apple’s Photonic Engine, introduced in recent models and enhanced in the iPhone 16 Pro, processes raw image data earlier in the pipeline. This enables better color fidelity and texture preservation during multi-frame stacking. In low light, the iPhone captures up to nine frames at varying exposures, aligning and merging them using machine learning models trained on millions of night photos. The result is balanced shadow detail and natural highlight roll-off.

Google’s Night Sight, now in its sixth generation, remains one of the most aggressive and effective low-light systems available. It leverages HDR+ with Bracketing, capturing up to 15 frames at different exposures and fusing them with AI-powered denoising. The Pixel 8 Pro introduces “Super Res Zoom” integration into Night Sight, enabling sharper details even when zoomed. More importantly, its temporal noise reduction algorithm analyzes consecutive frames to distinguish between real detail and random sensor noise, significantly reducing graininess.
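The benefit of merging many frames can be illustrated with a small simulation. This is a sketch of plain frame averaging under the assumption of independent Gaussian noise on a static scene, not Apple's or Google's actual pipeline, which also align frames, weight them, and reject motion. The function `stacked_noise` and its default values are hypothetical.

```python
import random
import statistics


def stacked_noise(num_frames, true_signal=50.0, noise_sigma=10.0,
                  num_pixels=5000, seed=0):
    """Residual noise (standard deviation) after averaging num_frames noisy frames."""
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    averaged = []
    for _ in range(num_pixels):
        # Each frame sees the same true signal plus independent random noise.
        samples = [rng.gauss(true_signal, noise_sigma) for _ in range(num_frames)]
        averaged.append(sum(samples) / num_frames)
    return statistics.pstdev(averaged)


# 9 and 15 are the frame counts quoted above for the iPhone and the Pixel.
for n in (1, 9, 15):
    print(n, round(stacked_noise(n), 2))
```

With independent noise, averaging N frames cuts the noise by roughly the square root of N, so nine frames leave about a third of the single-frame noise and fifteen frames about a quarter. Real scenes contain motion, which is why alignment and temporal filtering matter as much as the raw frame count.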

“Google’s multi-frame alignment and noise modeling are still ahead of the curve. They treat noise as a solvable math problem, not just something to suppress.” — Dr. Lena Park, Computational Imaging Researcher at MIT Media Lab

One key difference lies in tone preference. The iPhone tends to preserve ambient lighting mood—retaining warm glows from streetlamps or candlelight—while the Pixel often brightens scenes aggressively, sometimes making night look like twilight. This isn’t inherently better or worse; it reflects philosophical divergence. Apple preserves atmosphere; Google prioritizes visibility.

Real-World Performance: Urban Nights, Indoor Scenes, and Moving Subjects

To assess real-world usability, we evaluated both phones across three common low-light scenarios: urban nightscapes, poorly lit interiors, and handheld action shots.

Urban Nightscapes

In city environments with mixed artificial lighting, the Pixel 8 Pro consistently produced brighter images with greater dynamic range. Street signs remained legible, building textures were preserved, and sky gradients showed less banding. The iPhone 16 Pro rendered more natural color temperatures but occasionally underexposed distant subjects, requiring manual adjustment via the exposure slider.

Indoor Low-Light (Home Lighting, Restaurants)

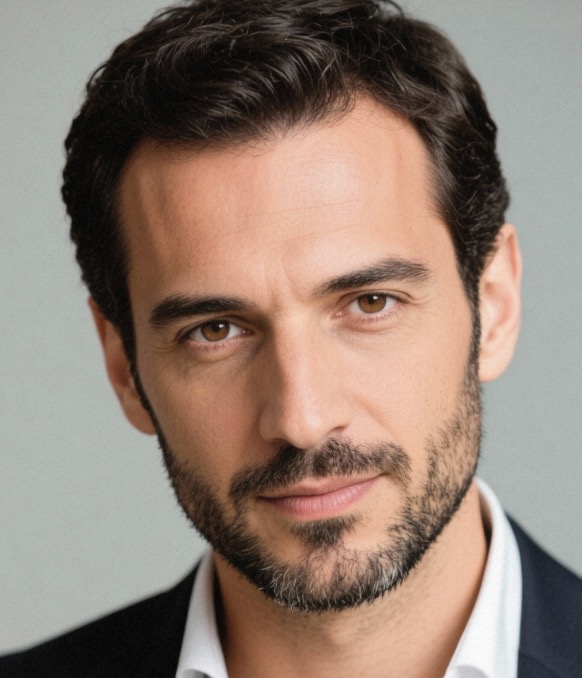

Under warm incandescent or LED lighting, the iPhone excelled in skin tone accuracy. Faces appeared lifelike without the slight greenish tint occasionally seen on the Pixel. However, the Pixel recovered more shadow detail in corners and background areas. In a dimly lit restaurant, the iPhone captured a cozy ambiance, while the Pixel made the same scene feel adequately lit, almost as if flash had been used subtly.

Moving Subjects and Handheld Shots

When photographing children or pets indoors, the iPhone’s faster processing pipeline gave it an edge. Its ability to freeze motion with shorter burst intervals resulted in more keepers. The Pixel’s longer capture and processing time (up to 4 seconds per shot in extreme darkness) made it vulnerable to motion blur unless the subject remained still.

Mini Case Study: Concert Photography Attempt

A music enthusiast tried capturing a friend’s acoustic set in a basement venue lit only by string lights. Ambient light measured around 10 lux, comparable to late twilight and far dimmer than typical indoor lighting.

- iPhone 16 Pro: Delivered a well-composed shot with accurate facial tones and visible guitar wood grain. Background audience members were silhouetted, but stage lighting halos were naturally rendered.

- Pixel 8 Pro: Brightened the entire scene significantly, revealing faces in the second row. However, some specular highlights on microphone metal appeared blown out, and the overall warmth of the setting was lost in favor of neutral white balance.

The user preferred the iPhone’s version for emotional authenticity, though the Pixel provided more informational content.

Video Capabilities in Low Light

Still photos aren’t the whole story. Many users now prioritize video quality, especially for vlogging or documenting events.

The iPhone 16 Pro supports Dolby Vision HDR recording at 4K up to 60fps, even in low light. Its cinematic mode now functions in Night mode, maintaining shallow depth-of-field effects with accurate focus transitions. Noise suppression is strong, though fine textures like fabric or hair can appear slightly smeared due to temporal filtering.

The Pixel 8 Pro offers “Night Sight Video,” a feature that uses AI processing to brighten footage. While impressive for visibility, it introduces mild artifacts during rapid movement and limits resolution to 1080p in ultra-low light. Audio sync remains solid, but dynamic range lags behind the iPhone during sudden light changes (e.g., turning on a lamp).

“Smartphone video in near-darkness used to be unusable. Now we’re seeing usable footage at light levels where DSLRs once struggled.” — Mark Tran, Mobile Cinematographer & Director of “No Light Needed” Documentary Series

Checklist: Maximizing Low-Light Performance on Either Device

Regardless of which phone you own, follow these steps to get the best possible results in dark conditions:

- Enable Night Mode – Confirm that it activates automatically, or set the exposure duration manually (3–6 sec).

- Use a Stable Surface – Even slight hand tremors degrade long-exposure shots.

- Avoid Digital Zoom – Optical or 2x crop zoom yields better quality than digital enlargement.

- Tap to Focus and Expose – Prioritize your subject; don’t let the camera average the whole scene.

- Shoot in Portrait Mode Sparingly – Depth mapping fails in very low light; stick to standard photo mode.

- Limit Post-Processing – Heavy editing amplifies noise; shoot as close to final as possible.

Frequently Asked Questions

Does the iPhone 16 Pro have a dedicated night sensor?

No, it does not. Like previous models, the iPhone relies on its primary wide lens with Night Mode enabled. There is no separate low-light-specific sensor, but the main camera's improvements make this less necessary.

Can the Pixel 8 Pro beat the iPhone in total darkness?

In very dim scenes (below about 5 lux), the Pixel 8 Pro generally produces brighter, more viewable images thanks to its longer exposure capability and aggressive AI enhancement. However, these images may lack naturalism and exhibit over-sharpening.

Which phone has better low-light portrait mode?

The iPhone 16 Pro creates more flattering skin tones and smoother bokeh in dim lighting. The Pixel struggles with edge detection in shadows, often misclassifying hair or glasses, leading to unnatural cutouts.

Final Verdict: Choosing Based on Your Needs

Which phone wins depends on what you value in a photograph.

If you prioritize **natural color reproduction**, **authentic mood**, and **video consistency**, the **iPhone 16 Pro** is the better choice. Its restrained processing preserves the feeling of being present in a dim environment, whether it’s a candlelit dinner or a moody jazz club. Professionals and creatives who care about tonal accuracy will appreciate Apple’s conservative approach.

If your goal is **maximum visibility**, **shadow recovery**, and **detail extraction from darkness**, the **Pixel 8 Pro** pulls ahead. It turns nearly invisible scenes into decipherable images, ideal for parents capturing bedtime moments, travelers documenting night markets, or anyone who wants to see “what’s actually there.”

Ultimately, Google continues to push the boundaries of how much light is *needed*, while Apple focuses on how light should *feel*. Neither philosophy is wrong—but they serve different users.
